Optical Flowmap Generator / Atlas Tool

Motivation

As I dug deeper into VFX, I grew dissatisfied with the scripts and mostly half-manual solutions available for handling flipbook texture atlases. Creating, re-exporting and testing flipbooks for my effects took too much time, and that is when the idea of building my own flipbook/atlas generator was born. Following the development of Star Citizen closely, I was greatly impressed by the results presented in Around the Verse dev blog #100, which showcased the use of Optical Flow Distortion Maps. After some digging I found Klemen Lozar's post about Frame Blending with Motion Vectors. Around March 2017 I finally managed to find some time and started creating the Optical Flowmap Generator on my own.

Inspiration

The biggest inspiration was this dev post by Star Citizen. You can watch the whole video, but the most awesome stuff starts at 14:52! An explosion that can last several seconds instead of the usual 1 or 2 seconds (at 24 fps)! I started digging and even tried out After Effects, but I did not have access to the software all the time.

Overview

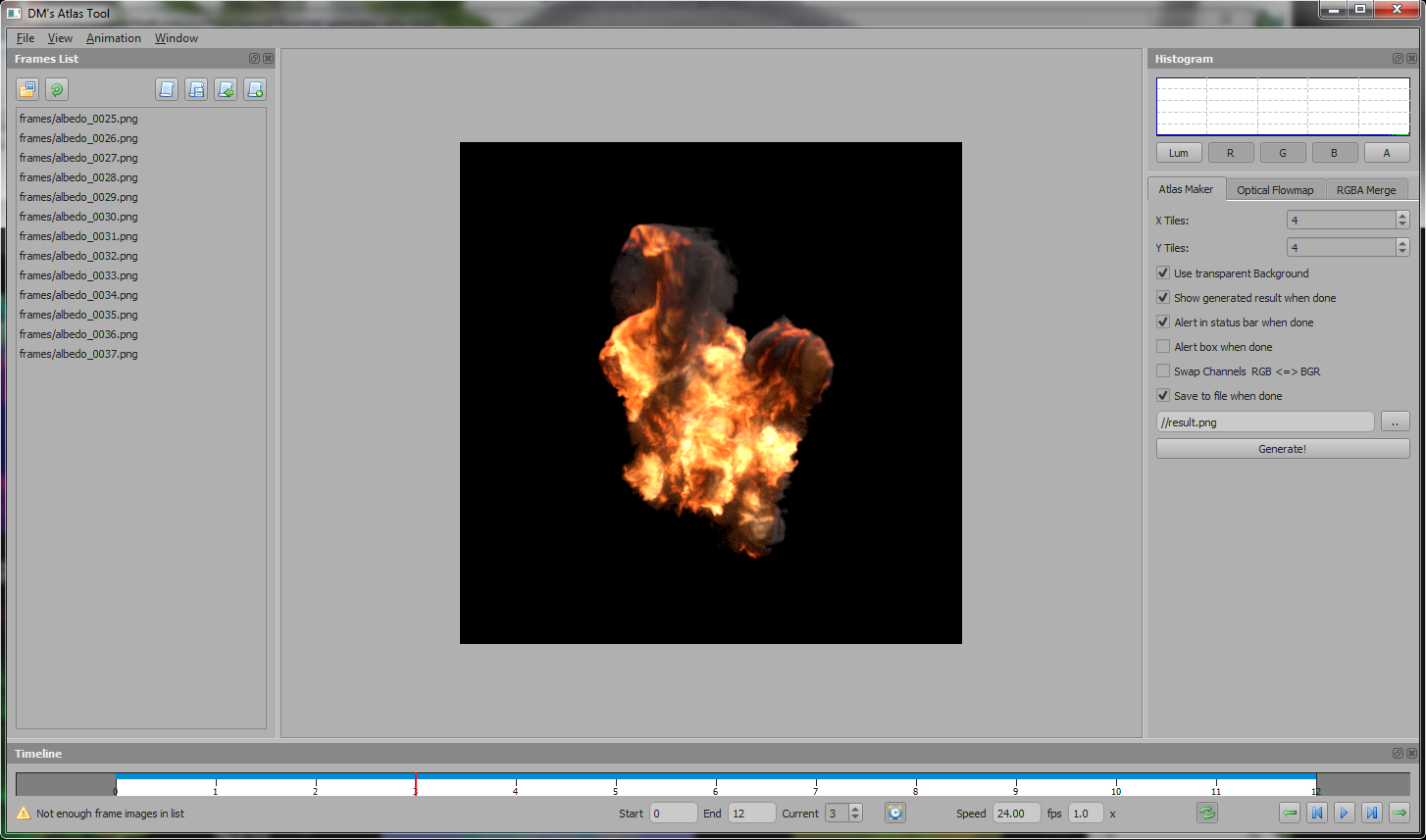

The first task was to create a UI with a Frames List: a list of images with drag & drop, sorting and the usual features a person would expect. Next came the main area, the Viewport where the animation plays, with zoom and panning so the frames can be inspected in detail. Of course, without control over animation playback the tool wouldn't be good enough, so a Timeline was created. I wanted to be able to choose the start and end frames, and to set the speed either by adjusting a multiplier (1.0x) or by entering an actual fps value. The current frame can also be dragged with the mouse or stepped through with the arrow keys. I also added a histogram to check the values used in textures, especially noise textures, because it wasn't much extra work anyway.
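To make the Timeline controls concrete, here is a minimal Python sketch of how a speed multiplier and a start/end range could map elapsed time to a frame index. The function names and the looping behaviour are my assumptions for illustration, not the tool's actual code.

```python
def effective_fps(base_fps: float, multiplier: float = 1.0) -> float:
    """Playback rate after applying the speed multiplier (1.0x = unchanged)."""
    return base_fps * multiplier


def frame_at(elapsed_s: float, fps: float, start: int, end: int) -> int:
    """Frame index shown after elapsed_s seconds.

    Assumption: playback loops over the inclusive range [start, end].
    """
    length = end - start + 1
    return start + int(elapsed_s * fps) % length


if __name__ == "__main__":
    fps = effective_fps(24, 0.5)          # half speed
    print(fps, frame_at(1.0, fps, 0, 7))  # frame reached after one second
```

Setting an explicit fps instead of a multiplier simply means passing that value to `frame_at` directly.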

After this was done, I started to create the tools one by one...

Tools

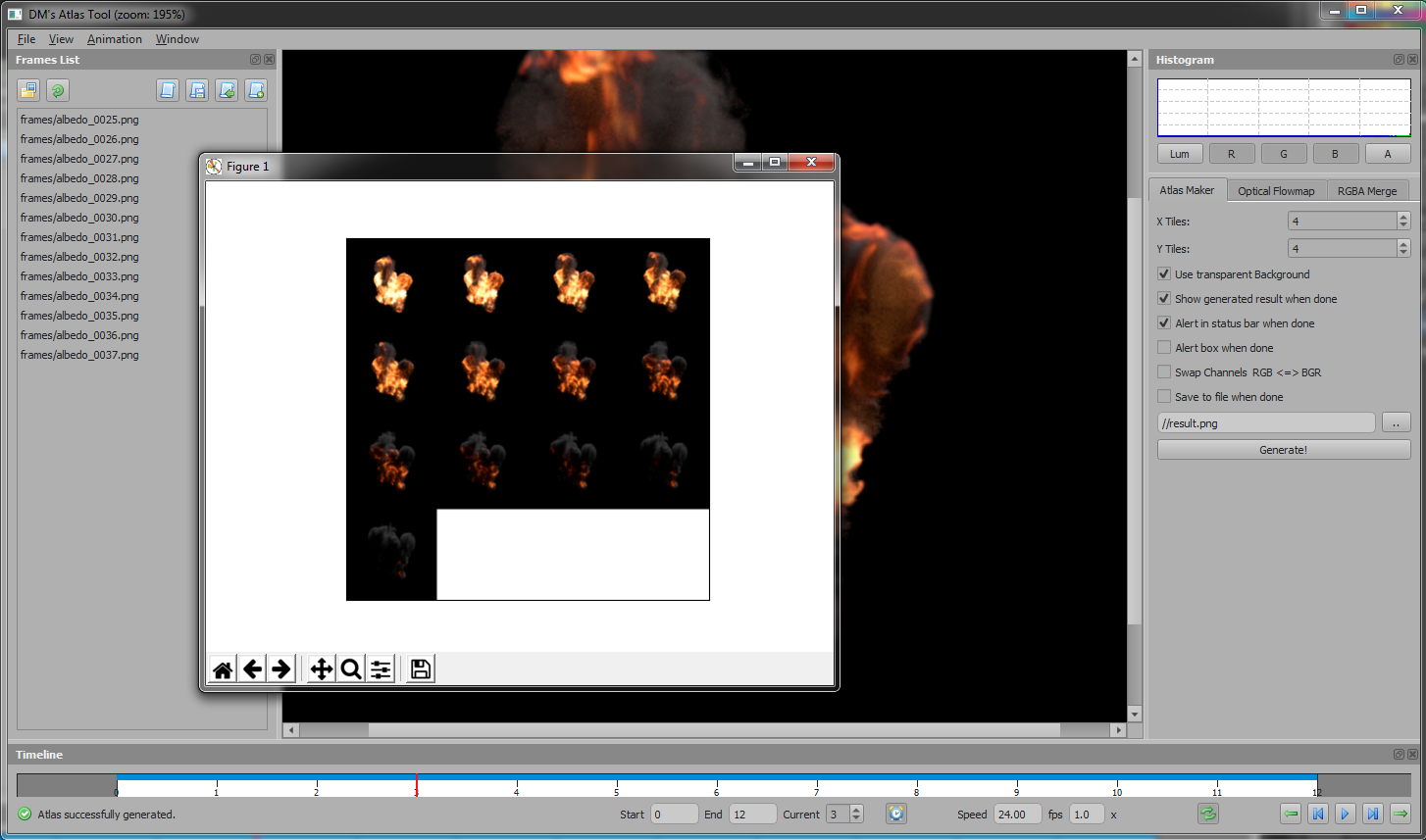

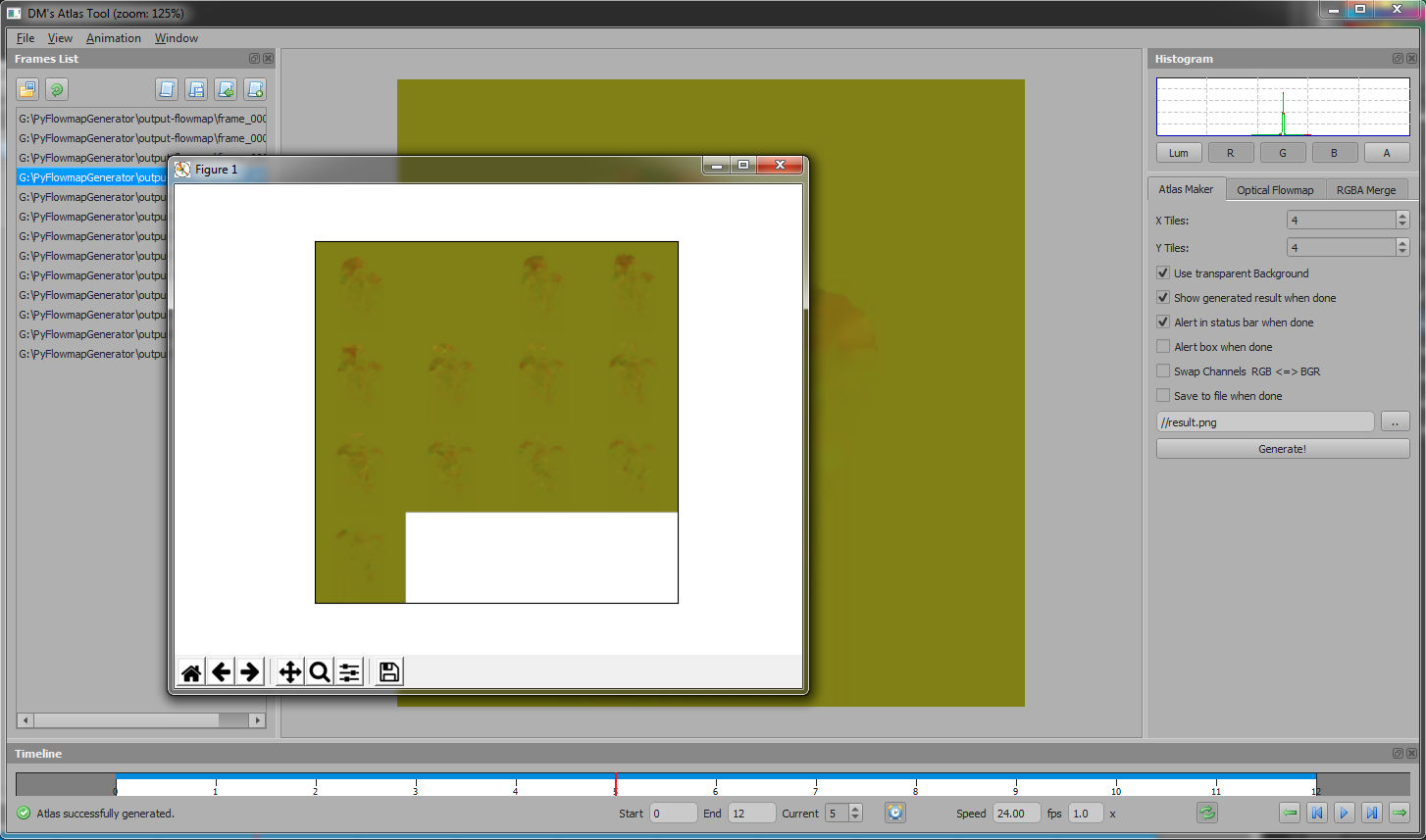

Atlas Maker

It lets you set the number of X and Y tiles in the image, swap between RGB and BGR channel order and, of course, save the result to a file.
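As a rough illustration of what the X/Y tile settings control, the Python sketch below computes where each frame would land in the atlas and shows the RGB/BGR swap for a single pixel. The row-by-row placement order and the names `atlas_layout` and `swap_rgb_bgr` are assumptions made for this example, not taken from the tool.

```python
from typing import List, Tuple


def atlas_layout(frame_count: int, tiles_x: int, tiles_y: int,
                 frame_w: int, frame_h: int) -> List[Tuple[int, int]]:
    """Return the top-left pixel offset of each frame in the atlas.

    Assumption: frames are placed row by row, left to right, top to bottom.
    """
    if frame_count > tiles_x * tiles_y:
        raise ValueError("not enough tiles for all frames")
    offsets = []
    for index in range(frame_count):
        col = index % tiles_x
        row = index // tiles_x
        offsets.append((col * frame_w, row * frame_h))
    return offsets


def swap_rgb_bgr(pixel: Tuple[int, int, int]) -> Tuple[int, int, int]:
    """Swap the first and third channel (RGB <-> BGR)."""
    first, second, third = pixel
    return (third, second, first)


if __name__ == "__main__":
    # Six 128x128 px frames on a 4x2 grid.
    print(atlas_layout(6, 4, 2, 128, 128))
```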

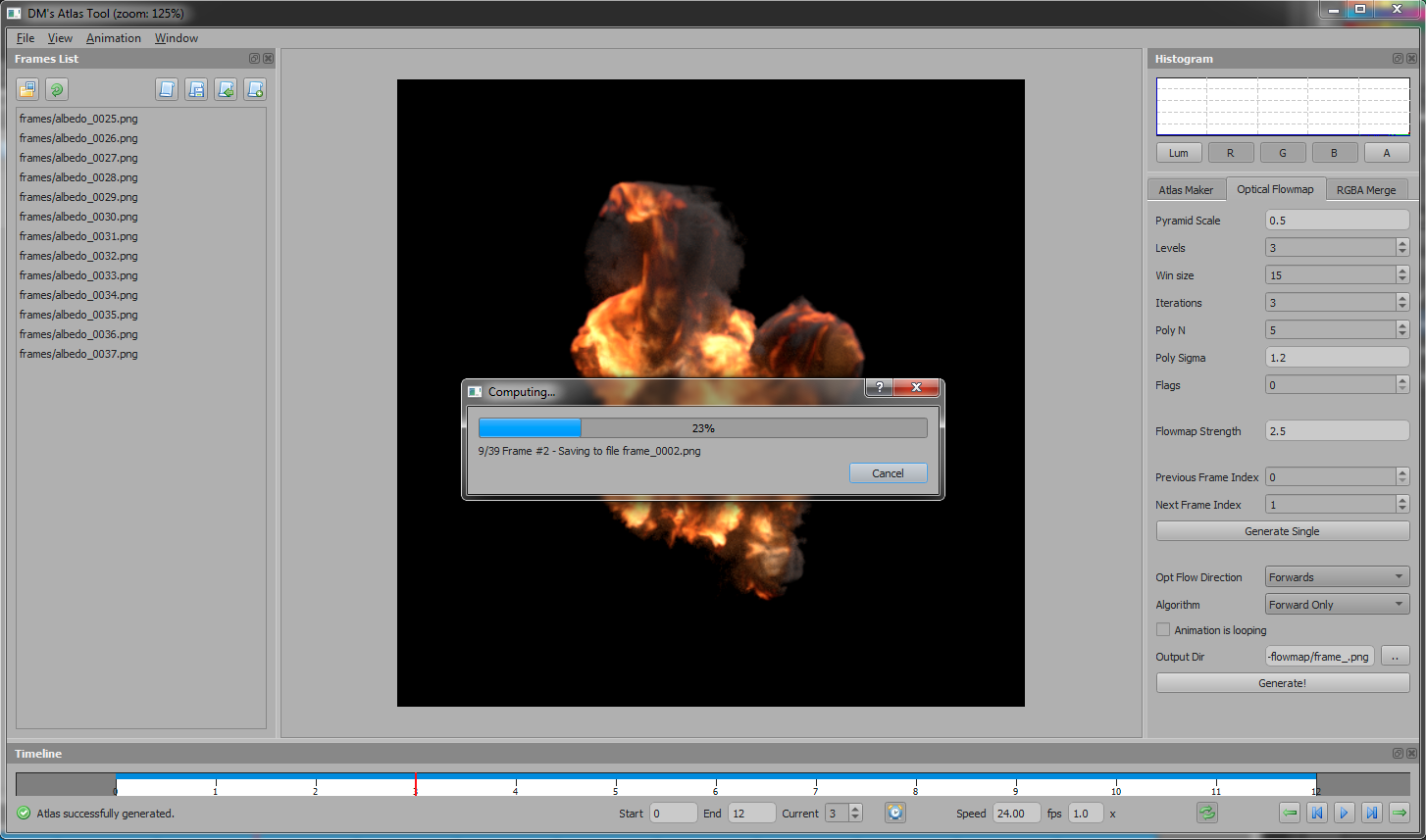

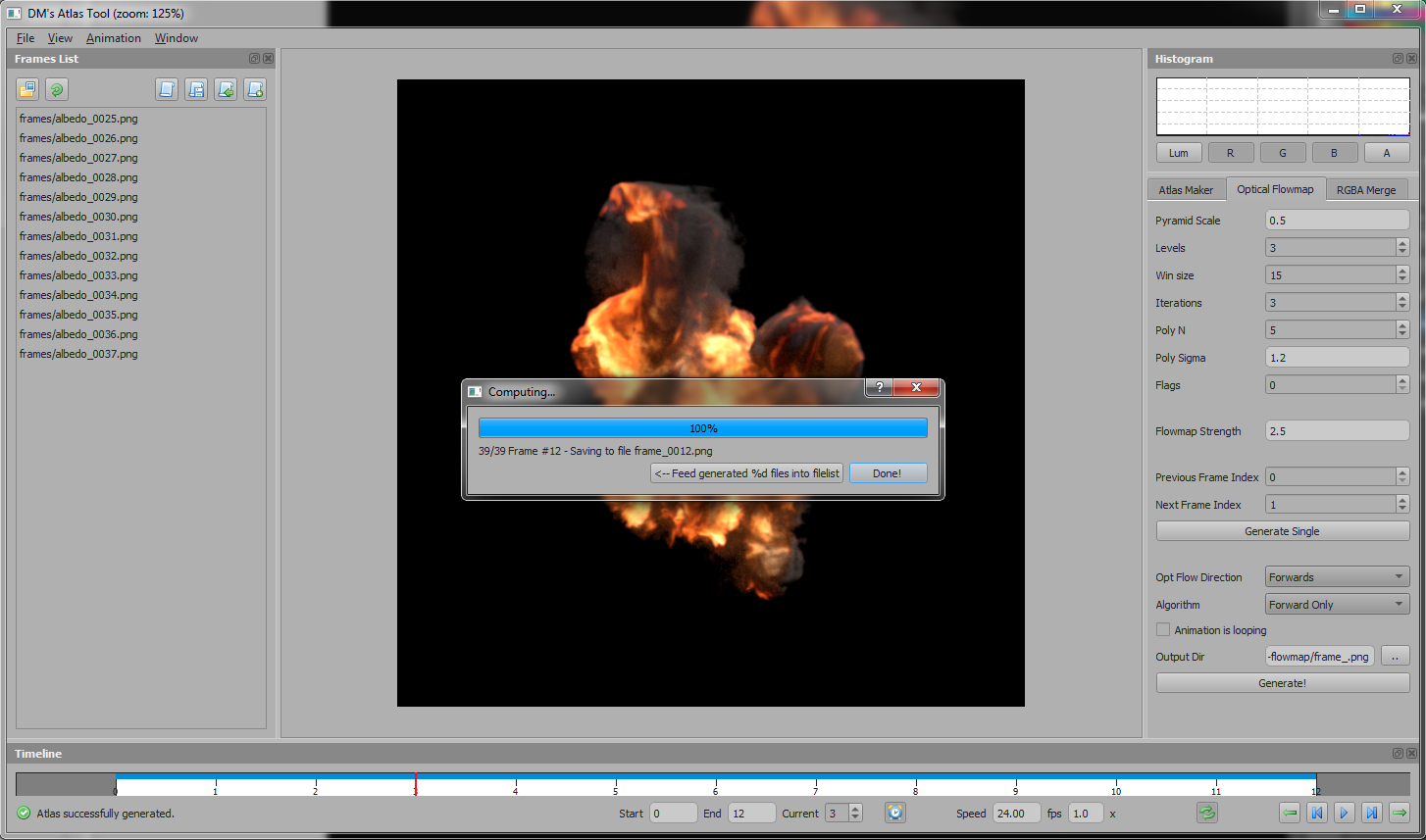

Optical Flowmap

OpenCV provides a toolset for generating flowmaps. All of the settings are available in the tool's tab. I stopped at a simple, naive implementation that analyses each frame against the next one (I call it forward analysis). I also had an idea for improving this process to get more precise results, but it was not implemented due to lack of time.
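The sketch below illustrates the two ideas in plain Python: pairing each frame with the next one for forward analysis, and packing a per-pixel motion vector into 8-bit red and green channels. It assumes the displacement values come from a dense optical flow routine (OpenCV's `calcOpticalFlowFarneback` is one such function, though I don't claim it is the one the tool uses). The `max_disp` scale and the mid-grey encoding are illustrative assumptions as well.

```python
from typing import List, Tuple


def forward_pairs(frame_count: int) -> List[Tuple[int, int]]:
    """Pair each frame index with the next one ("forward analysis")."""
    return [(i, i + 1) for i in range(frame_count - 1)]


def encode_flow(dx: float, dy: float, max_disp: float) -> Tuple[int, int]:
    """Map a displacement in [-max_disp, max_disp] pixels to 8-bit (R, G).

    Zero motion lands on mid-grey; values outside the range are clamped.
    """
    def to_byte(value: float) -> int:
        normalized = max(-1.0, min(1.0, value / max_disp))  # clamp to [-1, 1]
        return int(round((normalized * 0.5 + 0.5) * 255))   # remap to [0, 255]

    return (to_byte(dx), to_byte(dy))


if __name__ == "__main__":
    print(forward_pairs(4))
    print(encode_flow(2.0, -4.0, 8.0))
```

A shader reading such a map would reverse the remap (multiply by 2, subtract 1, scale by the same maximum displacement) to recover the motion vector.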

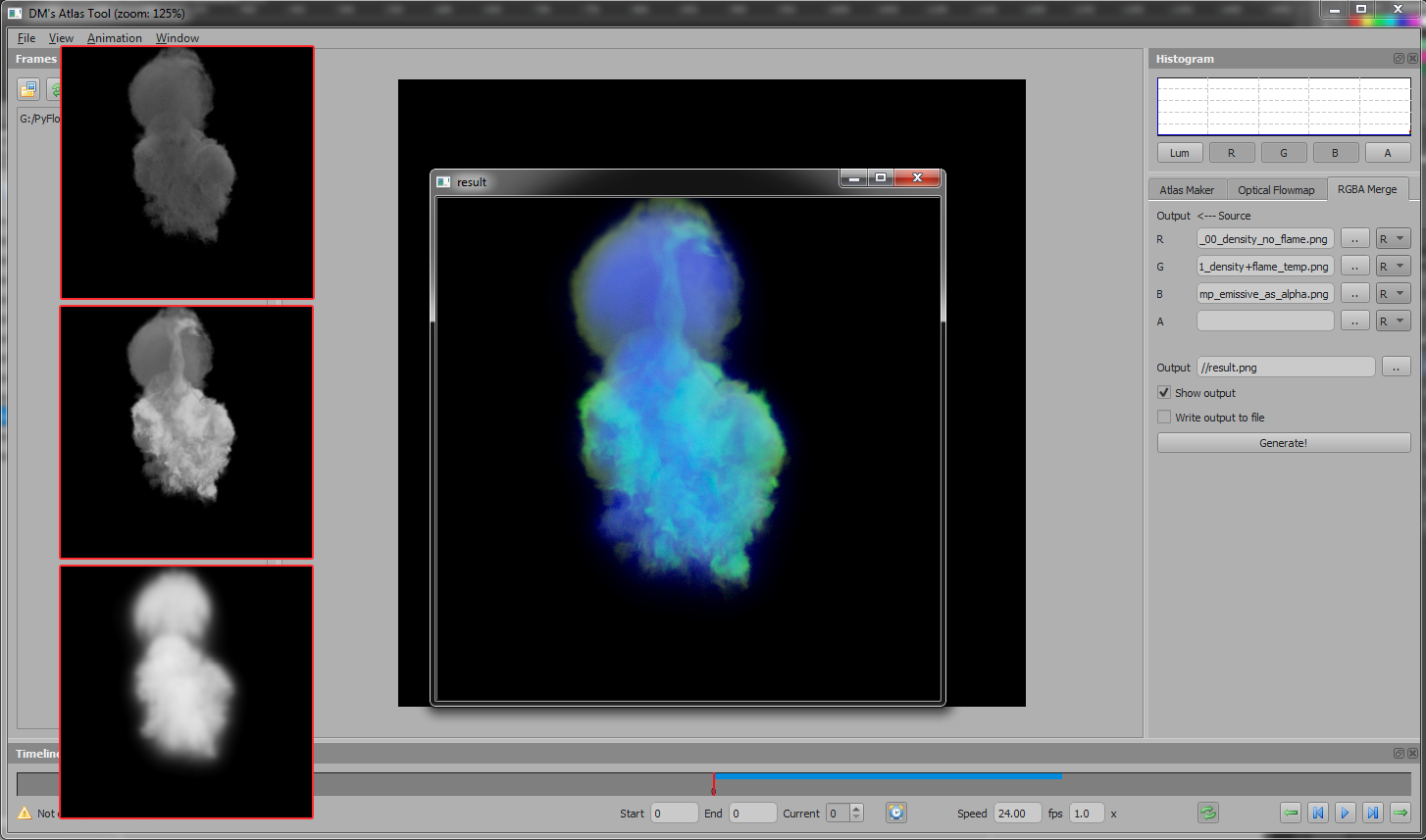

RGBA Merge

I often need to bake textures into RGBA channels to save texture memory and didn't want to do it in Substance Designer every time, so this feature was added as well.
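Channel packing itself is simple. Here is a minimal Python sketch that assumes four same-sized grayscale images stored as nested lists of 8-bit values; the `merge_rgba` name and the data layout are hypothetical and only meant to show the idea.

```python
from typing import List, Tuple

Gray = List[List[int]]                      # rows of 8-bit grayscale values
Rgba = List[List[Tuple[int, int, int, int]]]


def merge_rgba(r: Gray, g: Gray, b: Gray, a: Gray) -> Rgba:
    """Pack four same-sized grayscale images into one RGBA image."""
    sizes = {(len(img), len(img[0])) for img in (r, g, b, a)}
    if len(sizes) != 1:
        raise ValueError("all input images must have the same size")
    # zip the four images row by row, then the four rows pixel by pixel
    return [list(zip(*rows)) for rows in zip(r, g, b, a)]


if __name__ == "__main__":
    noise = [[10, 20]]
    mask = [[0, 255]]
    print(merge_rgba(noise, mask, noise, mask))
```

Four single-channel textures then cost one texture sample instead of four in the shader.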

Screenshots

UE4 Results

After all of this work, it was only a matter of time before I created a proper UE4 shader and started testing. This is the result I ended up with. I would say it's pretty good, but it still has room for improvement. So maybe in the future :)

BlenderFreak.com

BlenderFreak is a Django-powered website about Blender, game engines and a bit of programming, which also serves as a portfolio presentation.

Pavel Křupala currently works as a Technical Artist in the game industry and has a huge passion for CG, especially Blender.